Advantages of Quantisweb in Production and Operations Environment

By William Blasius

“Voice of the Customer” Advisor to Quantisweb Technologies

A Quantisweb Technologies White Paper

January 2018

As a seasoned manufacturing professional, experienced in developing optimal production settings with acceptable tolerances, you understand the importance of using Design of Experiments (DOE) methodology to avoid the trap of one-factor-at-a-time (OFAT) experimentation. You have seen it a hundred times over: a co-worker decides to change only one variable to get to an optimal solution quickly. That one variable turns into two, then three, then four. Without a solid plan, any chance of capturing interactions between the variables is squandered. All hope of finding the optimal solution is eventually lost, and the data gathered is useless for plugging back into standard design of experiments software. Eventually, executives in Operations and Quality Management tire of waiting for the promised results or, more likely, some other fire pops up from another non-optimized process and another OFAT effort starts in the hope of a quick fix. Life becomes an endless succession of fire-fighting exercises. In a counter-productive twist, even in companies dedicated to Lean Sigma, a culture evolves that celebrates the few OFAT successes as heroic when, in fact, they are just kindling for the next fire.

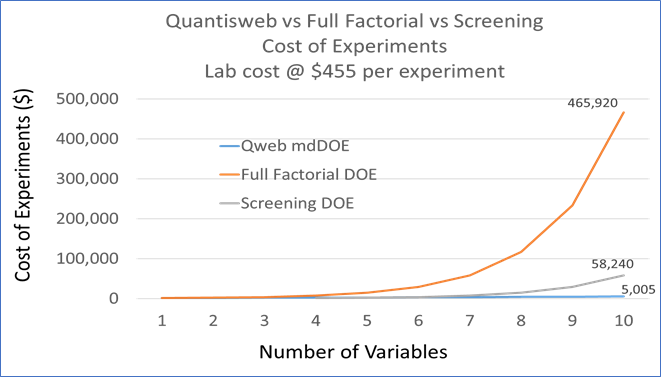

A significant step up from one-factor-at-a-time fiddling is standard design of experiments. DOE as we know it has its foundations in the 1920s and was a brilliant innovation that ushered in a new era of agricultural crop yield optimization, spreading into the chemical industry in the 1950s. The concepts that drive the rigid statistics behind DOE also become its limiting factor in a modern industrial manufacturing environment. The number of trials required to meet statistical rigor increases exponentially with the number of variables. One of the most useful aspects of DOE, response surface visualization, becomes merely conceptual beyond three variables, which form a three-dimensional cube. The typical corporate response to the confusion created by the many named DOE design options has been to deploy statisticians, or black belts with only six weeks of training, to help the experimenters. This assistance usually means forcing some trials into formulation or process zones that will fail, for the sake of orthogonal symmetry. Given that a two-level full factorial design takes 8 trials for three variables, 16 for four, 32 for five and 64 for six, it is easy to see how quickly an experimenter’s best intentions of covering as many variables as possible can become overwhelming. Assuming a conservative average cost of $2,500 for a small, single-shift, production-scale trial ($350/hr. lost profit), the costs run to $20,000, $40,000, $80,000 and $160,000 for 3, 4, 5 and 6 variables at only two levels. Table 1 below provides a graph showing the efficiency of Quantisweb in relation to the number of trials considered. What can a manufacturing engineer do with an eight-component formulation running through a twenty-variable process? Running 2 raised to the 28th power, or about 268 million trials at a cost of roughly $670 billion, is out of the question.
Screening designs that cut that number in half, to a quarter or to a tenth hardly make a dent in the impossibility of investigating all the variables at the same time. Chunking the problem into manageable groups of three variables at two levels is a reasonable solution, with the advantage of being able to tease out some potentially significant interactions. Someone will still need to decide, based on gut feelings or rules of thumb with no guiding data, that at least a few variables are insignificant and can be ignored. The risk inherent in chunking in production is that no chunk can be run until the previous chunk has been run and analyzed. In a plant with limited slack, scheduling downtime can be a serious issue. Multiple chunks can stretch an optimization project out for a year or more. Therefore, most Lean Sigma, optimization and yield enhancement projects tend to be small and hit only a few major variables like throughput, RPM, single-point temperature or residence time. Investigating formulation and raw material variability is viewed as a luxury, with no economically realistic way to integrate it back into process variability. Standard DOEs in a modern manufacturing environment keep engineers from taking a holistic, systematic approach to production/product optimization.
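The trial counts and costs quoted above follow directly from the full factorial formula (levels raised to the number of variables) multiplied by the assumed $2,500 per trial. A minimal Python sketch of that arithmetic is shown here; the function names are illustrative and not part of any Quantisweb product.

```python
# Illustrative arithmetic only: reproduces the full factorial trial counts
# and costs quoted in the text. Function names are hypothetical.

COST_PER_TRIAL = 2_500  # USD, the paper's "conservative average" per trial


def full_factorial_trials(n_variables: int, levels: int = 2) -> int:
    """Number of runs in a full factorial design: levels ** n_variables."""
    return levels ** n_variables


def full_factorial_cost(n_variables: int, levels: int = 2,
                        cost_per_trial: int = COST_PER_TRIAL) -> int:
    """Total cost in USD of running every trial of the full factorial once."""
    return full_factorial_trials(n_variables, levels) * cost_per_trial


# 3 to 6 variables, plus the 8-component, 20-variable case (28 variables).
for n in (3, 4, 5, 6, 28):
    trials = full_factorial_trials(n)
    cost = full_factorial_cost(n)
    print(f"{n:>2} variables: {trials:>11,} trials, ${cost:,}")
```

Changing `levels` to 3 shows how much faster the count grows once curvature is of interest, which is part of why chunking is so tempting in practice.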

Table 1: Efficiency of Quantisweb chart.

Quantisweb Technologies has been helping production professionals with whole-system product and process improvements. The heart of the Quantisweb software is an integrated optimization package that combines Design of Experiments with a weighted ranking system called the Analytical Hierarchy Process (AHP) and a statistical means of making choices in an environment of uncertainty known as Decision Theory (DT). This combination, powered by machine learning, has allowed the development of a next-generation design, analysis and optimization tool for manufacturing engineers to use in a variety of production environments.
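To give a feel for the weighted ranking idea behind AHP, the sketch below turns a small matrix of pairwise importance judgments into normalized weights using the row geometric-mean approximation, a common textbook shortcut. It is only an illustration under stated assumptions: the output names and judgments are invented, and nothing here is claimed to be how the Quantisweb software computes its weightings.

```python
import math

# Illustrative AHP-style weighting. The outputs and pairwise judgments are
# hypothetical, and the row geometric-mean method is a textbook
# approximation, not necessarily what Quantisweb uses internally.


def ahp_weights(pairwise):
    """Return normalized priority weights from a reciprocal pairwise matrix.

    pairwise[i][j] states how many times more important item i is than item j.
    """
    n = len(pairwise)
    geo_means = [math.prod(row) ** (1.0 / n) for row in pairwise]
    total = sum(geo_means)
    return [g / total for g in geo_means]


outputs = ["yield", "throughput", "scrap_rate"]  # hypothetical outputs

# Hypothetical judgments: yield is 2x as important as throughput and 4x as
# important as scrap rate; throughput is 2x as important as scrap rate.
pairwise = [
    [1.0, 2.0, 4.0],
    [0.5, 1.0, 2.0],
    [0.25, 0.5, 1.0],
]

weights = ahp_weights(pairwise)
for name, weight in zip(outputs, weights):
    print(f"{name}: {weight:.3f}")
```

Because these example judgments are perfectly consistent, the weights come out in the ratio 4:2:1. Real judgments are rarely this tidy, which is why full AHP treatments also check a consistency ratio.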

Thanks to ever-increasing computing power, the Quantisweb DOE function has evolved from its origins in turn-of-the-20th-century orthogonal arrays (paired data point comparisons) to cutting-edge stochastic approximation mathematics, an iterative means of modeling extremely complex problems with multiple unknown parameters. This has become what is now known as the minimal dynamic Design of Experiments (mdDOE) platform. The software is configured to utilize up to 200 variables to optimize up to 100 outputs. In a standard two-level full factorial DOE, that would be 2 to the 200th power trials, which is about 1.6 × 10^60, a number with 61 digits. With Quantisweb, that number is reduced to the number of variables plus one, or 201 trials at the software’s current practical maximum. The number of required trials is comparable to that of a Taguchi or Plackett-Burman experimental design, except that these two methods only optimize one output at a time, whereas Quantisweb optimizes all outputs at once. The big difference is that with stochastic approximation, trials can be clustered in the areas that the engineer is most interested in. The software deals with uncertainty; you do not have to fill in all the blanks with certainties or certain failures for the sake of classical statistical rigor. From the beginning, engineers have as much control of the optimization process as they are comfortable with. After the trials are complete, the AHP and Decision Theory components allow the engineer to take the behavioral laws generated from the trials and optimize the inputs (formulation ingredients, process conditions) based on importance/desirability weightings assigned to the different outputs. The input variables can be numeric, named or conditional, and can be used in combination. For example, conditional boundaries can be set up so that RPM must exceed A if Feed Rate is above B.
Reduced to its simplest form, the software accommodates the way that creative engineers think rather than forcing engineers to think like statistical software.
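The conditional boundary mentioned above ("RPM must exceed A if Feed Rate is above B") can be pictured as a simple rule applied to candidate trial settings. The sketch below is a hypothetical illustration in Python: the class name, field names and threshold values are all assumptions made for the example, and it does not represent the Quantisweb interface.

```python
from dataclasses import dataclass

# Hypothetical illustration of a conditional boundary on trial settings.
# Names and thresholds are invented; this is not the Quantisweb API.


@dataclass(frozen=True)
class ConditionalMinimum:
    """If `trigger` exceeds `trigger_above`, `target` must exceed `target_min`."""
    trigger: str
    trigger_above: float
    target: str
    target_min: float

    def allows(self, settings: dict) -> bool:
        """Return True when the settings satisfy the conditional rule."""
        if settings[self.trigger] > self.trigger_above:
            return settings[self.target] > self.target_min
        return True  # rule not triggered, so nothing to enforce


# "RPM must exceed A if Feed Rate is above B", with A = 300 and B = 50
# chosen arbitrarily for the example.
rule = ConditionalMinimum(trigger="feed_rate", trigger_above=50.0,
                          target="rpm", target_min=300.0)

candidates = [
    {"feed_rate": 40.0, "rpm": 250.0},  # rule not triggered
    {"feed_rate": 60.0, "rpm": 350.0},  # triggered and satisfied
    {"feed_rate": 60.0, "rpm": 280.0},  # triggered and violated
]

feasible = [c for c in candidates if rule.allows(c)]
print(f"{len(feasible)} of {len(candidates)} candidate trials are feasible")
```

Screening candidate trials this way keeps runs out of zones the engineer already knows will fail, which is the practical point the paragraph makes about not forcing trials for the sake of symmetry.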

A very important aspect of the Quantisweb methodology is the inherent agility built into the process. Most companies collect huge amounts of information on their processes and gather certificates of analysis (COA) on raw materials from their suppliers. If that data can be traced forward to the resulting product quality measures, the data mining capability of the system can analyze the data within the normal variability of the process and inputs to weed out irrelevant variables and suggest the highest-impact trials, either to build behavior laws or to optimize whichever outputs would have the most benefit for the business. If you are currently collecting that information to report Cpk or some other passive process capability number, you can put that same information to work on understanding how the inputs and process function.
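As a rough picture of what "weeding out irrelevant variables" from historical records can look like, the sketch below ranks inputs by the absolute Pearson correlation with a quality output. This is a deliberately simple stand-in and an assumption for illustration: plain correlation cannot see interactions between variables, it is not the Quantisweb data mining method, and the records shown are made-up numbers rather than real plant data.

```python
import math

# Simplified screening sketch: rank inputs by |Pearson correlation| with an
# output. The records are invented for illustration, and this technique is a
# stand-in, not the Quantisweb data mining algorithm.


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant column carries no information
    return sxy / math.sqrt(sxx * syy)


def rank_inputs(records, inputs, output):
    """Return (input name, |correlation|) pairs, strongest first."""
    ys = [r[output] for r in records]
    scores = {name: abs(pearson([r[name] for r in records], ys))
              for name in inputs}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical historical records (process data traced to a quality measure).
records = [
    {"melt_temp": 200, "moisture": 0.30, "tensile": 50.1},
    {"melt_temp": 205, "moisture": 0.10, "tensile": 51.0},
    {"melt_temp": 210, "moisture": 0.25, "tensile": 52.1},
    {"melt_temp": 215, "moisture": 0.15, "tensile": 52.9},
    {"melt_temp": 220, "moisture": 0.20, "tensile": 54.0},
]

ranked = rank_inputs(records, ["melt_temp", "moisture"], "tensile")
for name, score in ranked:
    print(f"{name}: |r| = {score:.3f}")
```

In this invented data set the melt temperature tracks the output closely while moisture does not, so melt temperature would be the first candidate for a designed trial.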

One particularly useful agility component comes from being able to focus on specific variables and variable interactions without having to start from a blank slate. With data-mined information, rough models can be run to simulate the process and outputs, helping you decide what amount of effort will give you the maximum return before you approach a production scheduler with your plan to take over a manufacturing train.

Once production optimization trials have been completed, further work can retain the base knowledge of the first round to increase data density and model accuracy. The Quantisweb methodology is not just iterative; it is cumulatively iterative. That is the true value of a machine learning system over the discrete paired comparison approach built into standard DOE programs. The software may even be used to put some meaning to the OFAT or abandoned chunked DOE efforts of your coworkers.

In summary, Quantisweb Technologies has taken the experimental discipline developed by R.A. Fisher in the 1920s and refined by Genichi Taguchi in the 1980s, and applied to that foundational work mathematical processes that are only now commercially practical because of the continued expansion of computing speed and power. Combining contemporary mathematics with the Analytical Hierarchy Process and Decision Theory drives Quantisweb software that minimizes trial commitment and maximizes agility while delivering behavioral laws that build in robustness with each iteration. Quantisweb Technologies’ goal is to help you develop and optimize your products and processes for quality and throughput as quickly and robustly as possible, so you can maximize profitability and increase effective plant capacity without capital expenditures.